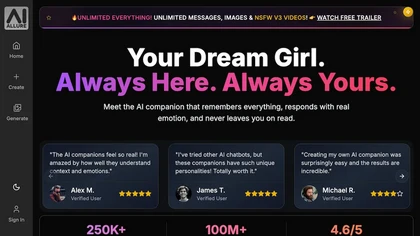

AI Girls: Top Complimentary Apps, Authentic Chat, and Safety Tips 2026

February 19, 2026

Here’s a no-nonsense guide to the 2026 “AI girls” ecosystem: what is genuinely free, how realistic conversation has become, and how to stay safe around AI nude generators, online nude tools, and adult AI tools. You will get a pragmatic look at the market, quality benchmarks, and a consent-first safety playbook you can use immediately.

The term “AI companions” spans three distinct product categories that are commonly conflated: AI chat partners that mimic a romantic-partner persona, adult image generators that synthesize bodies, and AI undress applications that attempt clothing removal on real photos. Each category has a different pricing model, realism ceiling, and risk profile, and conflating them is where most users get into trouble.

Understanding “AI girls” in the current landscape

AI companions currently fall into three clear categories: companion chat applications, adult image generators, and clothing removal tools. Companion chat focuses on personality, memory, and voice; image generators aim for realistic nude generation; clothing removal apps try to infer bodies underneath clothes.

Companion chat apps carry the least legal risk because they create fictional personas and fully synthetic content, usually governed by explicit policies and user rules. Adult image generators can be lower risk when used with fully synthetic prompts or virtual personas, but they still raise platform-policy and data-handling concerns. Clothing removal or “undress”-style tools are the riskiest category because they can be exploited to produce non-consensual deepfake imagery, which many jurisdictions now treat as a criminal offense. Framing your goal clearly (companion chat, synthetic fantasy images, or realism tests) determines which category is appropriate and how much security friction you must accept.

Market map and key players

The market divides by purpose and by how the content is created. Services such as DrawNudes, UndressBaby, AINudez, Nudiva, and similar are marketed as AI nude generators, web-based nude creators, or AI undress applications; their selling points usually center on quality, speed, cost per output, and privacy promises. Companion chat platforms, by comparison, compete on conversational depth, latency, memory, and voice quality rather than on visual output.

Because adult AI tools are volatile, assess vendors by their documentation rather than their marketing. At minimum, look for a clear consent policy that forbids non-consensual or underage content, an explicit data retention statement, a way to delete uploads and generations, and transparent pricing for credits, subscriptions, or API use. If a clothing removal app advertises watermark removal, “no logs,” or the ability to “bypass safety filters,” treat that as a red flag: responsible providers do not encourage non-consensual misuse or policy evasion. Always verify in-platform safety measures before uploading anything that might identify a real person.

Which AI girl apps are really free?

Most “free” options are freemium: you get a limited number of generations or messages, plus ads, watermarks, or throttled speed unless you subscribe. A truly free experience generally means lower resolution, queue delays, or strict guardrails.

Expect companion chat apps to offer a modest daily allowance of messages or credits, with NSFW toggles often locked behind paid tiers. Adult image generators usually include a small number of low-quality credits; paid tiers unlock higher resolutions, faster queues, private galleries, and custom model options. Undress applications rarely stay free for long because compute costs are high; they often move to per-image credits. If you want zero-cost experimentation, consider on-device, open-source models for chat and safe image experimentation, but stay away from sideloaded “clothing removal” programs from untrusted sources, which are a frequent malware vector.

Comparison table: choosing the right type

Choose your tool category by matching your goal with the risk you are willing to accept and the consent you can obtain. The table below outlines what you typically get, what it costs, and where the risks are.

| Type | Typical pricing model | What the free tier provides | Main risks | Best for | Consent feasibility | Privacy exposure |
|---|---|---|---|---|---|---|
| Companion chat (“AI girlfriend”) | Limited free messages; recurring subscriptions; voice as an add-on | Limited daily messages; basic voice; adult content often gated | Oversharing personal information; emotional dependency | Roleplay, romantic simulation | High (virtual personas, no real people) | Medium (conversation logs; check retention) |
| Adult image generators | Credits per output; higher tiers for HD/private | Low-resolution trial credits; watermarks; queue limits | Policy violations; leaked galleries if not set to private | Synthetic NSFW imagery, stylized bodies | High if fully synthetic; obtain explicit consent if using reference photos | Medium-High (files, prompts, outputs stored) |
| Clothing removal / “undress” tools | Per-image credits; few legitimate free tiers | Occasional single-use trials; heavy watermarks | Liability for non-consensual deepfakes; malware in questionable apps | Technical curiosity in controlled, consented tests | Low unless all subjects explicitly consent and are verified adults | High (face images uploaded; serious privacy concerns) |

How realistic is chat with AI girls today?

State-of-the-art companion chat is impressively convincing when platforms combine strong LLMs, short-term memory, and persona grounding with expressive TTS and low latency. The limits show under pressure: long conversations drift, boundaries wobble, and emotional continuity breaks when memory is shallow or safety measures are inconsistent.

Realism hinges on four elements: latency under 2 seconds to keep turn-taking smooth; character cards with consistent backstories and boundaries; voice models that convey timbre, pacing, and breathing cues; and memory policies that keep important details without hoarding everything you say. For safer use, set boundaries explicitly in the opening messages, avoid sharing personal information, and choose providers that support on-device or end-to-end encrypted chat where possible. If a chat tool markets itself as an “uncensored companion” but does not explain how it protects your data or upholds consent practices, move on.

Evaluating “realistic nude” image quality

Quality in a realistic nude generator is less about marketing and more about anatomy, lighting, and consistency across poses. The best AI models handle skin texture, joint articulation, finger and toe fidelity, and fabric-to-skin transitions without seam artifacts.

Undress pipelines frequently break on occlusions such as crossed arms, layered clothing, accessories, or hair; watch for warped jewelry, mismatched tan lines, or shading that does not match the original photo. Fully synthetic generators do better in stylized scenes but may still produce extra fingers or asymmetrical eyes on extreme prompts. For realism tests, compare outputs across multiple poses and lighting setups, zoom to 200 percent to check for seam errors at the collarbone and hips, and inspect reflections in mirrors or glossy surfaces. If a platform hides originals after upload or prevents you from deleting them, that is a deal-breaker regardless of image quality.

Security and consent guardrails

Use only consensual, adult content, and do not upload identifiable photos of real people unless you have clear, written permission and a legitimate purpose. Many jurisdictions criminalize non-consensual deepfake nudes, and services ban AI undress use on real subjects without consent.

Adopt a consent-first norm even in private settings: obtain clear permission, keep evidence of it, and keep uploads de-identified where practical. Never attempt “clothing removal” on photos of acquaintances, public figures, or anyone under 18; images where age is ambiguous are off-limits. Reject any application that claims to circumvent safety filters or remove watermarks; those signals correlate with policy violations and higher breach risk. Finally, remember that intent does not erase harm: producing a non-consensual deepfake, even if you never publish it, can still violate laws or platform terms and can harm the person depicted.

Privacy checklist before using any undress app

Minimize risk by treating every undress application and online nude generator as a potential data-retention threat. Favor platforms that process on-device or offer private modes with end-to-end encryption and clear deletion controls.

Before you upload: read the privacy policy for retention windows and third-party processors; confirm there is a delete-my-data process and a contact for content removal; avoid uploading identifying features or distinctive tattoos; strip EXIF metadata from image files locally; use a burner email and payment method; and isolate the tool in a separate system profile. If the platform requests camera roll permissions, refuse and share only individual files. If you see language like “may use your uploads to improve our models,” assume your material could be retained and reused elsewhere. When in doubt, do not upload any image you would not be comfortable seeing leaked.

Recognizing deepnude outputs and online nude generators

Detection is imperfect, but forensic tells include inconsistent shading, unnatural skin transitions where clothing was, hair edges that blend into skin, jewelry that melts into the body, and reflections that do not match. Zoom in around straps, belts, and fingers; clothing removal tools frequently struggle with these edge conditions.

Look for unnaturally uniform pores, repeating texture tiling, or blurring that tries to hide the seam between synthetic and original regions. Check metadata for missing or generic EXIF where an original would carry device tags, and run a reverse image search to see whether the face was lifted from a different photo. Where available, verify provenance via Content Credentials; some platforms embed provenance data so you can see what was modified and by whom. Use third-party detectors cautiously, since they produce both false positives and false negatives, and combine them with manual review and provenance signals for stronger conclusions.
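As a rough illustration of the metadata check described above, the following sketch scans a JPEG's header segments for an Exif block using only the Python standard library. The function name `has_exif` and the sample byte strings are hypothetical, and the parser handles only the common segment layout. A missing Exif block is a weak signal on its own, since many platforms strip metadata on upload.

```python
import struct


def has_exif(jpeg_bytes: bytes) -> bool:
    """Return True if the JPEG header contains an Exif APP1 segment."""
    if jpeg_bytes[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing SOI marker")
    pos = 2
    while pos + 4 <= len(jpeg_bytes):
        if jpeg_bytes[pos] != 0xFF:
            break  # unexpected data; stop scanning
        marker = jpeg_bytes[pos + 1]
        if marker == 0xFF:  # fill byte before a marker
            pos += 1
            continue
        if marker in (0xDA, 0xD9):  # start of scan or end of image
            break
        # Segment length is big-endian and includes its own two bytes.
        (length,) = struct.unpack(">H", jpeg_bytes[pos + 2 : pos + 4])
        payload = jpeg_bytes[pos + 4 : pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return True
        pos += 2 + length
    return False


# Tiny hand-built samples (not real photos) to exercise the parser.
sample_with_exif = (
    b"\xff\xd8\xff\xe1" + struct.pack(">H", 12) + b"Exif\x00\x00abcd" + b"\xff\xd9"
)
sample_without_exif = (
    b"\xff\xd8\xff\xe0" + struct.pack(">H", 7) + b"JFIF\x00" + b"\xff\xd9"
)

print(has_exif(sample_with_exif))
print(has_exif(sample_without_exif))
```

In practice you would read the bytes from a file with `open(path, "rb").read()` and treat the result as one input alongside manual review.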

What should you do if your image is used non-consensually?

Act quickly: preserve evidence, file reports, and use official takedown channels in parallel. You do not need to prove who created the deepfake to begin removal.

First, capture URLs, timestamps, page screenshots, and hashes of the images; save page HTML or archive snapshots. Second, report the content through the platform’s impersonation, nudity, or manipulated-media reporting systems; many major platforms now provide dedicated non-consensual intimate imagery (NCII) channels. Third, submit a removal request to search engines to limit discovery, and file a legal takedown notice if you own the original image that was manipulated. Fourth, contact local law enforcement or a cybercrime unit and hand over your evidence log; in some regions, NCII and synthetic media laws provide criminal or civil remedies. If you are at risk of further targeting, consider a monitoring service and talk with a digital safety nonprofit or legal aid group experienced in non-consensual content cases.
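The evidence-capture step can be sketched as a small log entry that ties a URL and a UTC timestamp to a SHA-256 hash of the saved file. This is a minimal sketch: the field names, the `evidence_record` helper, and the example URL are illustrative assumptions, not a format required by any platform or agency.

```python
import hashlib
import json
from datetime import datetime, timezone


def evidence_record(url: str, image_bytes: bytes, note: str = "") -> dict:
    """Build one evidence-log entry for a saved copy of an image."""
    return {
        "url": url,
        "captured_at_utc": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "sha256": hashlib.sha256(image_bytes).hexdigest(),
        "size_bytes": len(image_bytes),
        "note": note,
    }


# Placeholder bytes stand in for the contents of a saved image file.
record = evidence_record(
    "https://example.com/post/123",
    b"example image bytes",
    note="screenshot saved alongside this file",
)
print(json.dumps(record, indent=2))
```

Recording the hash when you save the file lets you show later that the copy you hand over has not changed since capture.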

Little-known facts worth knowing

Fact 1: Many services fingerprint images with content hashing, which lets them detect exact and near-duplicate uploads across the web even after crops or minor edits. Fact 2: The C2PA standard, promoted by the Content Authenticity Initiative, enables cryptographically signed “Content Credentials,” and a growing number of cameras, editors, and media platforms are testing it for verification. Fact 3: Both Apple’s App Store and Google Play restrict apps that facilitate non-consensual sexual content or sexual exploitation, which is why many undress applications operate only on the open web, outside mainstream stores. Fact 4: Cloud providers and foundation model companies commonly forbid using their systems to produce or distribute non-consensual explicit imagery; if a site boasts “unrestricted, no rules,” it may be violating upstream terms and faces a greater risk of sudden shutdown. Fact 5: Malware disguised as “nude generation” or “automated undress” programs is widespread; if a tool is not web-based with clear policies, treat downloadable binaries as dangerous by default.
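To illustrate how content hashing can match a slightly edited copy, here is a simplified "average hash" in plain Python. It is a teaching sketch, not the algorithm any particular service uses. It assumes the image has already been reduced to an 8x8 grayscale grid, and the sample grids are made up for the demonstration.

```python
def average_hash(pixels):
    """Hash a small grayscale grid: one bit per pixel, set if above the mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a, b):
    """Count the bit positions where two hashes differ."""
    return bin(a ^ b).count("1")


# Hypothetical 8x8 grids: dark left half, bright right half.
original = [[10] * 4 + [200] * 4 for _ in range(8)]
# The same picture with a slight, uniform brightness change.
brightened = [[value + 5 for value in row] for row in original]
# A different picture: dark top half, bright bottom half.
different = [[10] * 8 for _ in range(4)] + [[200] * 8 for _ in range(4)]

print(hamming_distance(average_hash(original), average_hash(brightened)))  # 0
print(hamming_distance(average_hash(original), average_hash(different)))   # 32
```

A small distance suggests the same underlying image, while a large one suggests unrelated content. Production systems use more robust hashes and tuned thresholds.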

Closing take

Use the right category for the right purpose: companion chat for roleplay, NSFW image generators for synthetic NSFW imagery, and avoid undress applications unless you have written consent from an adult and a controlled, private workflow. “Free” typically means limited credits, watermarks, or reduced quality; paywalls fund the GPU resources that make realistic conversation and images possible. Above all, treat privacy and consent as non-negotiable: limit uploads, confirm deletion controls, and walk away from any app that hints at deepfake misuse. When assessing vendors such as DrawNudes, AINudez, or PornGen, test only with anonymous inputs, verify retention and deletion before you commit, and never use images of real people without clear permission. Realistic AI experiences are possible this year, but they are only worth it if you can access them without crossing ethical or legal lines.